On the most recent episode of Opening Arguments, the terrific legal and current affairs podcast that also happens to have excellent taste in WordPress themes, host Andrew Torrez posed an interesting statistical question (around 56:00): are Supreme Court decisions becoming increasingly polarized?

My thesis is this, and the first half is pretty well established in the law review literature: that Supreme Court cases throughout our history have had a reverse-normal distribution. That is, they have predominantly been 9-0 decisions and tailing off toward 5-4. […] The mode of Supreme Court decisions has been 9-0. My thesis is that the distribution of Supreme Court decisions can be mathematically shown to have changed demonstrably beginning in the 1980s, and then beginning sharply with the Roberts court in 2007.

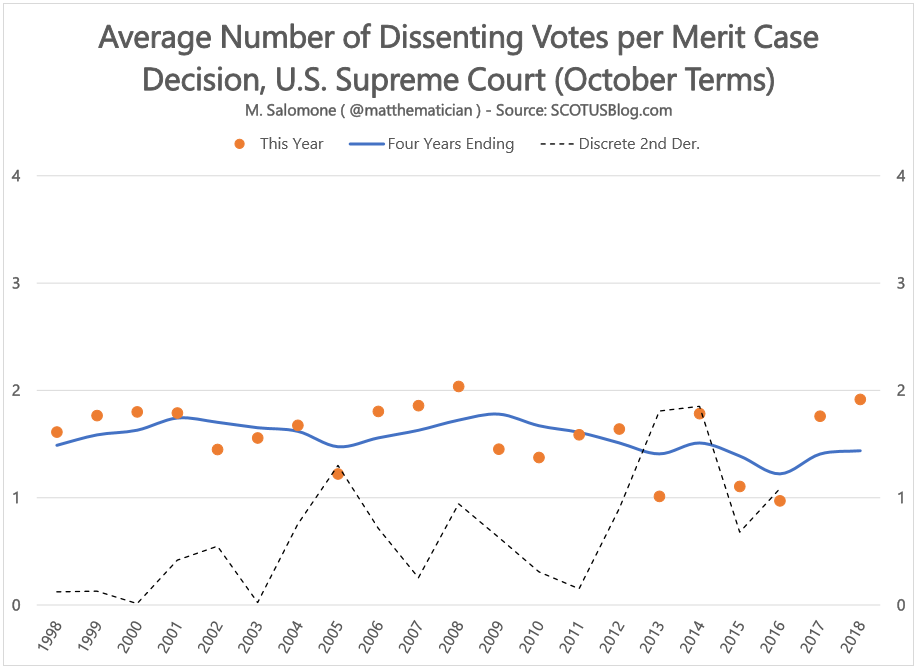

Using the “stat pack” reports compiled on SCOTUSblog, which go back to 1995, I claim that the answer is no. While unanimous 9-0 decisions (counting both those with and without dissenting opinions) remain the modal outcome in cases decided on the merits in every year since 1995, I find that the mean number of dissenting votes in merits cases has not (yet) markedly increased under the Roberts court.
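As a minimal sketch of the two summary statistics used here, assume a term’s merits decisions have been tallied by number of dissenting votes (0 for a unanimous decision, 4 for a 5-4 split). The mean and the modal number of dissents then follow directly. The function name `dissent_summary` and the counts below are hypothetical, for illustration only, and are not SCOTUSblog figures.

```python
def dissent_summary(tally: dict[int, int]) -> tuple[float, int]:
    """Return (mean dissenting votes per decision, modal number of dissents).

    `tally` maps a number of dissenting votes (0 = unanimous, 4 = a 5-4 split)
    to how many merits decisions in a term had that many dissents.
    """
    total_cases = sum(tally.values())
    total_dissents = sum(votes * count for votes, count in tally.items())
    mean = total_dissents / total_cases
    mode = max(tally, key=tally.get)  # the most frequent split (first one wins ties)
    return mean, mode


# Hypothetical counts for one term, for illustration only (not real data).
example_term = {0: 30, 1: 10, 2: 8, 3: 12, 4: 15}
mean, mode = dissent_summary(example_term)
print(f"mean dissents = {mean:.2f}, modal dissents = {mode}")
```

Because the mode only looks at the single most common split, a term can keep 9-0 as its mode even while its mean climbs, which is why the two numbers are worth tracking separately.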

According to these data, the most polarized year of the past twenty was 2008. That year had the narrowest gap between the number of unanimous decisions (26) and the number of 5-4 decisions (23), and it was the only year in this data in which the average number of dissenting votes exceeded two (2.04). By contrast, lost in the political sturm und drang of October 2016 was the fact that the term beginning that month was the most unanimous of the past twenty, with 41 unanimous decisions and only seven 5-4 decisions, for an average of less than one dissenting vote per decision (0.97).

Beyond that, the trend since 2007 has not hockey-sticked in either direction, toward unanimity or toward polarization. So if the second half of this data tells a different story from the first, it may lie in the “zig-zagging.”

A Heavy Foot on the Pedals

Prior to 2007 in this data, the levels of unanimity in the court appeared fairly stable year over year. There were periods of gradual and probably statistically insignificant increases in polarization (1998-2001, 2002-2004, and 2006-2008) interrupted only by two sudden drops toward unanimity in 2002 and 2005. After 2008, however, the steadiness seems to evaporate and we see larger up-down-up-down swings in unanimity (2012-2013-2014-2015 being the most notable).

So perhaps unanimity on the Court is not disappearing but becoming more fragile. One way to measure these “changes of direction” is to estimate the magnitude of the second derivative of the average number of dissenting votes. This captures the zig-zagginess of the data: it is zero when the data increases or decreases at a constant rate (i.e., in a straight line) and is largest at the sharpest reversals in direction, such as when the sharp increase from 2013 to 2014 was followed by a sharp decrease from 2014 to 2015.

I used a centered five-point finite-difference estimate of the second derivative, [latex]f''(x_n) \approx \displaystyle\frac{-f_{n-2} + 16f_{n-1} - 30f_n + 16f_{n+1} - f_{n+2}}{12}[/latex], to measure this volatility. (The usual [latex]h^2[/latex] factor in the denominator is 1 here because the data points are one year apart. Since the data extended back to 1995, there was enough information for a centered five-point estimate for each year 1998 through 2016 shown on the chart.)
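Here is a minimal Python sketch of this centered five-point estimate, assuming the yearly averages are stored in a list with one entry per consecutive year (so the step `h` is 1). The function name `second_derivative_5pt` and the zig-zag series are hypothetical, for illustration only. Taking absolute values of the result gives the magnitude used as the volatility measure.

```python
def second_derivative_5pt(values: list[float], h: float = 1.0) -> list[float]:
    """Centered five-point finite-difference estimate of f'' at interior points.

    The result lines up with values[2:-2]: the first two and last two points
    lack the two neighbors on each side that the stencil needs.
    """
    return [
        (-values[n - 2] + 16 * values[n - 1] - 30 * values[n]
         + 16 * values[n + 1] - values[n + 2]) / (12 * h * h)
        for n in range(2, len(values) - 2)
    ]


# A straight line changes at a constant rate, so its second derivative is zero.
print(second_derivative_5pt([1.0, 1.5, 2.0, 2.5, 3.0, 3.5]))  # → [0.0, 0.0]

# A hypothetical zig-zag series (not real data): magnitudes are largest at reversals.
zigzag = [1.0, 1.2, 1.4, 1.0, 1.6, 1.1, 1.7]
volatility = [abs(d) for d in second_derivative_5pt(zigzag)]
print(volatility)
```

The stencil is exact for polynomials up to degree five, so a straight line gives zero and a parabola such as `n * n` gives a constant 2, which makes it a reasonable smoother-than-three-point choice for a short yearly series.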

The dashed line on the chart shows the magnitude of this second derivative, and to my eye there is the beginning of an increase in this quantity under the Roberts court: the year-to-year swings in unanimity appear to have become larger and more frequent. If that holds, recent levels of unanimity or polarization are becoming less reliable predictors of future levels.

To use a metaphor, imagine the Court as a car whose gas pedal accelerates it toward polarization and whose brake pedal slows it toward unanimity. The 2000s, when this second derivative was smaller, were a time of light feet on the pedals, with neither sharp acceleration nor hard braking. Since 2008, by contrast, there has been a heavy foot on the pedals, and the direction of movement toward or away from unanimity has changed frequently and swiftly. The next several years will tell whether this general increase continues. If it does, court watchers and fans of either unanimous or partisan courts are advised to invest in protective neck collars against whiplash.

What’s next?

This was a fun short exploration. I’d be interested in whether the longer historical perspective that Torrez suggests is borne out by data extending further back. It’d also be nice to go the next step toward his distributional hypothesis: for example, by computing the skewness of these distributions over time to assess for a shift from right-tailed (unanimity bias) to left-tailed (polarized bias).

UPDATE (9/4/19):

The variance and skewness of the dissenting vote distributions don’t tell a story dissimilar to the above:

The variance measures the dispersion or “spread” of each distribution and is lowest when the data is most clustered around its mean. Here it is lowest in 2016, when unanimous 9-0 decisions dominated the Court (41 decisions had no dissenting votes; only 28 decisions that term were not unanimous). There doesn’t appear to be much to learn from the years in which variance was highest: these are simply the years with the widest variety of splits, ranging from 9-0 to 5-4.
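A minimal sketch of this variance, assuming each term is summarized as a tally of decisions by number of dissenting votes (0 through 4). This version computes the population variance (dividing by the number of decisions); whether the spreadsheet used population or sample variance is an assumption here. The function name `dissent_variance` and both tallies are hypothetical, for illustration only.

```python
def dissent_variance(tally: dict[int, int]) -> float:
    """Population variance of dissenting votes per decision.

    `tally` maps a number of dissenting votes (0-4) to how many decisions
    had that many dissents. Divides by the number of decisions; a sample
    variance would divide by one fewer.
    """
    n = sum(tally.values())
    mean = sum(votes * count for votes, count in tally.items()) / n
    return sum(count * (votes - mean) ** 2 for votes, count in tally.items()) / n


# Hypothetical tallies, for illustration only (not real data).
clustered = {0: 40, 1: 2, 2: 1}               # nearly all unanimous: low spread
mixed = {0: 15, 1: 12, 2: 12, 3: 12, 4: 15}   # every split common: high spread
print(dissent_variance(clustered), dissent_variance(mixed))
```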

There is perhaps a more interesting story in the skewness, however. Skewness is the statistical quantity that most closely addresses Torrez’s hypothesis about bias reversal: it is zero for distributions that are symmetrical about their mean, positive for distributions with long right tails (i.e., those that are “heavier” to the left of their mean), and negative for distributions with long left tails (“heavier” to the right of their mean).

In our distributions, unanimity (zero dissenting votes) is the leftmost outcome on the scale, while maximal polarization (four dissenting votes) is the rightmost. So when our distributions have positive skew they are biased more toward unanimity; when they have negative skew they are biased more toward polarization. Put another way, positive skew typically means that more than half the data falls below the mean, and negative skew typically means that more than half falls above it (a useful rule of thumb, though not a guarantee for every distribution).
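A minimal sketch of the skewness calculation, again assuming each term is summarized as a tally of decisions by number of dissenting votes. This computes the population moment coefficient of skewness, g1 = m3 / m2^1.5; which estimator the spreadsheet actually used is an assumption here. (If it used Excel’s SKEW, that function applies a small-sample adjustment that changes the magnitude but not the sign.) The function name `dissent_skewness` and the tallies are hypothetical, for illustration only.

```python
def dissent_skewness(tally: dict[int, int]) -> float:
    """Population moment skewness g1 = m3 / m2**1.5 of a dissent tally.

    Positive values mean the bulk of decisions sits near unanimity with a
    tail toward 5-4 splits; negative values mean the reverse.
    """
    n = sum(tally.values())
    mean = sum(votes * count for votes, count in tally.items()) / n
    m2 = sum(count * (votes - mean) ** 2 for votes, count in tally.items()) / n
    m3 = sum(count * (votes - mean) ** 3 for votes, count in tally.items()) / n
    if m2 == 0:
        raise ValueError("skewness is undefined when every decision has the same split")
    return m3 / m2 ** 1.5


# Hypothetical tallies, for illustration only (not real data).
print(dissent_skewness({0: 8, 4: 2}))  # mostly unanimous: positive skew
print(dissent_skewness({0: 2, 4: 8}))  # mostly 5-4: negative skew
```

Computing this for each term’s tally and plotting the results by year gives a skewness series of the kind discussed here.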

By this measure, the only year in the past twenty with a bias toward polarization (negative skew) was 2008, which was also the year with the highest average number of dissenting votes per case in this data. But 2018 was a very close second! This might capture the feeling court watchers have about last year’s decisions: apart from 2008, no year’s vote-split distribution came closer than 2018’s to favoring split decisions over unanimity.

Of course, the caveat with all of this analysis is that it is statistically somewhat simplistic, and any one year on its own is not evidence of a trend. But much as the recent inversion of the bond yield curve may portend a recession, if last year’s drop in skewness is a hint of things to come, we may be on the cusp of an era of Court decisions biased more toward splits than toward unanimity. Let’s see what we can learn from the term that begins this fall.

Any suggestions or tips? Give me a shout on Twitter.

Download

- SCOTUS.XLSX (data file, including the chart shown above, in Excel format)

If you want a larger dataset, it might be useful to look at the Washington University Law Supreme Court Database.

I’ll check it out, thanks!